TL;DR

- Intent analysis classifies customer feedback based on what the customer wants to happen next, not just how they feel. Sentiment says positive or negative. Intent says advocacy, feature request, question, complaint, or escalation.

- Zonka Feedback's analysis of 1M+ responses found that 23% contain clear intent signals. Each type routes to a different team: advocacy to marketing, feature requests to product, complaints to support, escalations to management.

- Intent has a short shelf life. An advocacy signal detected and routed within 24 hours becomes a testimonial. The same signal found in a quarterly report is a missed opportunity.

- Most feedback programs stop at sentiment. Intent analysis goes further: it turns every response into a routing decision with a named owner, a defined action, and a timeline.

- When intent detection combines with entity recognition and experience quality signals, routing becomes fully contextual: a complaint about a specific agent with high urgency goes to that agent's manager immediately.

A customer writes "I've recommended you to three colleagues already." Another writes "I want to speak to a manager." A third writes "It would be great if you added a Slack integration."

All three are feedback. All three might score similarly on a satisfaction scale. But each one contains a completely different signal about what should happen next: the first is an advocacy opportunity for marketing, the second is an escalation for management, and the third is a feature request for the product team.

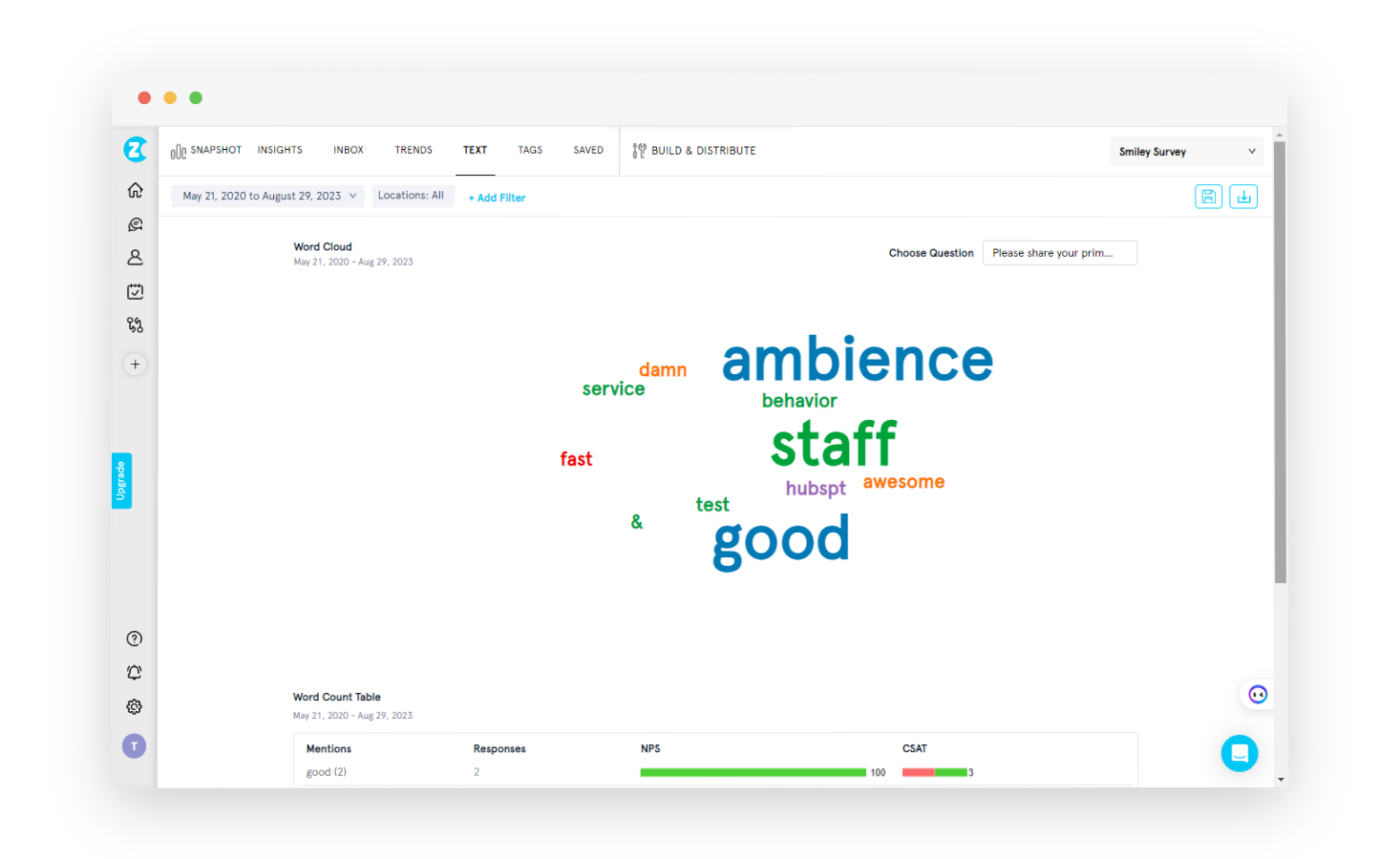

That's what intent analysis captures: not the feeling behind feedback, but the forward-looking signal about what the customer expects. Our analysis of over one million feedback responses found that nearly one-quarter contain clear intent signals. Most go unrouted because the analysis stops at sentiment.

When we designed the Feedback Intelligence Framework, we made customer intent the second sub-pillar alongside experience quality: not an afterthought, not a "nice to have." Intent is what turns feedback from data into a work queue. This guide covers what intent analysis is, how the five intent types map to team routing, what changes when intent combines with other signals, and how to apply intent analysis across industries.

What Is Intent Analysis in Customer Feedback?

Intent analysis is the process of classifying customer feedback based on what the customer wants to happen next. It answers a different question than sentiment analysis: sentiment tells you how the customer feels (positive, negative, mixed, neutral). Intent tells you what they expect (action, acknowledgment, resolution, escalation).

Microsoft's work on intent-based routing in Dynamics 365 demonstrated the operational impact: when a system recognizes customer intent in real time, it can route the interaction to the right resource without requiring manual triage. The concept translates directly to feedback analysis. In simple terms, intent analysis turns every piece of feedback into a routing decision: who needs to see this, and what should they do with it?

The distinction matters because most feedback analysis stops at understanding. Thematic analysis tells you WHAT customers are talking about. Experience quality signals tell you HOW the experience felt. Intent tells you WHY the customer communicated and what they expect as a result. Without intent classification, feedback is an archive. With it, feedback is a work queue.

Intent sits within the Feedback Intelligence Framework as the second sub-pillar of experience signals, alongside experience quality. Both are detected at the response level AND the individual theme level. That dual detection is what makes routing precise: the same response can carry advocacy intent on one theme and complaint intent on another.
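To make the dual detection concrete, here is a minimal sketch of how a classified response might be represented, using the patient comment discussed later in this guide. The class names, field names, and the `ROUTES` mapping are hypothetical and chosen for illustration; they are not the framework's actual schema.

```python
from dataclasses import dataclass, field

# Hypothetical intent-to-team mapping, following the five types in this guide.
ROUTES = {
    "advocacy": "marketing",
    "feature_request": "product",
    "question": "support",
    "complaint": "support",
    "escalation": "management",
}


@dataclass
class ThemeIntent:
    theme: str   # what the customer is talking about
    intent: str  # what they expect to happen for that theme


@dataclass
class ClassifiedResponse:
    text: str
    response_intents: list[str]  # response-level detection
    theme_intents: list[ThemeIntent] = field(default_factory=list)  # theme-level detection

    def destinations(self) -> dict[str, str]:
        """Map each theme to the team that should see it."""
        return {t.theme: ROUTES[t.intent] for t in self.theme_intents}


response = ClassifiedResponse(
    text="The doctor was fantastic, but I had to call twice to reschedule.",
    response_intents=["advocacy", "complaint"],
    theme_intents=[
        ThemeIntent("doctor", "advocacy"),
        ThemeIntent("scheduling", "complaint"),
    ],
)
print(response.destinations())
```

One response, two themes, two destinations: the praise for the doctor and the scheduling complaint each reach a different owner.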

5 Customer Intent Types and Where Each One Routes

Customer intent in feedback clusters into five types. Each one points to a different team, a different action, and a different timeline, and each carries a shelf life that determines how quickly you need to route and act on it. Miss the window, and the signal loses its value: an advocacy opportunity becomes a data point, an escalation becomes a churned customer. Here's what each type looks like in practice and what happens when it goes undetected.

1. Advocacy → Marketing

"I've told all my friends about you." "Absolutely love this product." "Would definitely recommend." "Best customer service I've ever had."

Advocacy intent signals that the customer is actively promoting your brand. These are your testimonial candidates, your referral sources, your case study leads. Marketing teams can convert advocacy signals into social proof, referral program invitations, and review requests. The catch: advocacy intent has a short shelf life. A customer who felt strongly enough to write "best experience I've had" today won't feel the same urgency to participate in a case study three months later. Detection and routing need to happen within days, not quarters.

When advocacy intent goes undetected, the most enthusiastic customers become invisible. Their positive energy dissipates into a satisfaction score that nobody acts on.

2. Feature Request → Product

"I wish you had..." "It would be great if..." "Can you add..." "The one thing missing is..." "Your competitor offers X and I'd love to see it here."

Feature request intent signals that the customer is invested enough in the product to describe what they'd improve. This is direct roadmap intelligence from the people who use the product daily. When 50 different customers express feature request intent around the same capability, that's a demand signal that internal prioritization frameworks (RICE, MoSCoW) should incorporate alongside their own estimates.

Product teams that receive feature request signals in real time can validate demand before committing engineering resources. Product teams that discover the same requests in a quarterly review are three months behind the customer's timeline. The 23% stat from Zonka Feedback's research matters here: in a dataset of 2,000 monthly responses, roughly 460 contain intent signals. A meaningful portion of those will be feature requests that the product team never sees if they're buried in a general feedback dashboard.

Feature request intent also carries competitive intelligence when combined with entity recognition. A request that says "Your competitor offers X" doesn't just tell the product team what to build. It tells them why the customer is looking elsewhere and which competitor is setting the expectation. That's two signals (feature request + competitor entity) from a single sentence, routed to both the product team and the competitive intelligence function.

3. Question → Support / Knowledge Base

"How do I...?" "Where can I find...?" "Is it possible to...?" "I'm not sure how to..."

Question intent signals that the customer needs help but hasn't necessarily had a negative experience. They're looking for information, not resolution. This intent type is valuable for two reasons: first, it routes to support or self-service teams who can respond before frustration builds. Second, patterns of question intent around the same topic reveal knowledge base gaps, documentation deficiencies, or UX confusion that should be fixed at the source.

If 40 customers in a month ask "How do I export my data?", that's not 40 support tickets to answer. That's one documentation fix that eliminates the need for the next 40.
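As a rough sketch of that idea, the snippet below counts question-intent responses by topic and flags any topic that crosses a threshold. It assumes each response has already been tagged with an intent and a topic; the function name and the threshold of 10 are illustrative assumptions, not values from the research.

```python
from collections import Counter


def documentation_gaps(tagged, threshold=10):
    """Return (topic, count) pairs for question topics asked at least `threshold` times.

    `tagged` is an iterable of (intent, topic) pairs. The threshold is an
    assumed cut-off; tune it to your own volume.
    """
    counts = Counter(topic for intent, topic in tagged if intent == "question")
    return [(topic, n) for topic, n in counts.most_common() if n >= threshold]


# Hypothetical month of tagged feedback.
month = (
    [("question", "data export")] * 40
    + [("question", "billing")] * 3
    + [("complaint", "data export")] * 5  # complaints are not counted as questions
)
print(documentation_gaps(month))
```

Here only "data export" is flagged: 40 questions on one topic point to one documentation fix, while three billing questions stay below the cut-off.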

4. Complaint → Support / Operations

"This is unacceptable." "Still not resolved." "Extremely disappointed." "I've been dealing with this for weeks."

Complaint intent is what most teams assume all negative feedback is. But not all negative feedback is a complaint. A low CSAT score with a comment about "room for improvement" is negative sentiment without complaint intent. A response that says "This is the third time this has happened and nobody has fixed it" is a complaint: the customer expects remediation.

Routing complaint intent to the right operations or support lead, with the associated theme and entity context, means the responding team knows what the complaint is about, who it involves, and how urgent it is before they open the ticket. Without intent classification, complaints sit in the same queue as questions, feature requests, and advocacy signals, all waiting for someone to manually read and sort them.

5. Escalation → Management

"I want to speak to a manager." "I'm going to take this further." "This needs to be escalated." "I'll be contacting your CEO." "I'm reporting this to the regulator."

Escalation intent is the highest-urgency classification. The customer isn't just complaining. They're signaling that the current resolution path has failed and they're moving up the chain. This intent type requires immediate human intervention: not a template response, not a workflow, not a queue. It requires someone with authority seeing the feedback within hours, ideally minutes.

Escalation intent is also the signal most likely to co-occur with high effort and high urgency from the experience quality layer. A customer who escalates has typically already experienced repetition, channel-switching, or resignation-level friction. The escalation is the last signal before the relationship breaks.

One CX leader in financial services put it directly during Zonka Feedback's research conversations: "It's not enough to know what the customer said. You need to track what action was taken." Escalation intent is the signal where that principle matters most.

| Intent Type | Routes To | Example Phrases | Action Window |
| --- | --- | --- | --- |
| Advocacy | Marketing | "Would definitely recommend," "Told my friends" | Days (shelf life of enthusiasm) |
| Feature Request | Product | "I wish you had," "Can you add" | Weeks (aggregate before acting) |
| Question | Support / KB | "How do I," "Where can I find" | Hours (before frustration builds) |
| Complaint | Support / Ops | "Unacceptable," "Still not resolved" | Hours (recovery window) |
| Escalation | Management | "Speak to a manager," "Taking this further" | Minutes (relationship at risk) |
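The table can be expressed as a small routing configuration. This is a sketch under stated assumptions: the table gives windows only as days, weeks, hours, or minutes, so the exact durations below (other than the 24-hour advocacy window mentioned in the TL;DR) are placeholders you would replace with your own targets.

```python
from datetime import datetime, timedelta

# Intent type -> (owning team, action window).
# ASSUMPTION: the specific durations are illustrative, not prescribed values.
ROUTING = {
    "advocacy": ("marketing", timedelta(hours=24)),
    "feature_request": ("product", timedelta(weeks=2)),
    "question": ("support", timedelta(hours=4)),
    "complaint": ("support", timedelta(hours=4)),
    "escalation": ("management", timedelta(minutes=15)),
}


def route(intent: str, received_at: datetime) -> tuple[str, datetime]:
    """Return the owning team and the time by which someone should act."""
    team, window = ROUTING[intent]
    return team, received_at + window


team, due_by = route("escalation", datetime(2025, 1, 6, 9, 0))
print(team, due_by)
```

Keeping the window next to the team in one structure means a routed item always arrives with its deadline, which is what turns a classification into a timeline.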

How Intent Analysis Differs from Sentiment Analysis

Sentiment and intent answer different questions about the same feedback. They overlap in some cases, but they're not interchangeable, and treating them as if they are leads to misrouting.

Consider two positive responses:

Response A: "Love the product, would recommend it to anyone." Sentiment: positive. Intent: advocacy. Action: route to marketing for testimonial outreach.

Response B: "Love the product, but I really wish you'd add dark mode." Sentiment: positive. Intent: feature request. Action: route to product for roadmap consideration.

Same sentiment. Completely different routing. If your system only detects sentiment, both responses end up in the "positive" bucket and neither generates the specific action it deserves.

Now consider two negative responses:

Response C: "I'm confused about how billing works." Sentiment: negative. Intent: question. Action: route to support or KB team for clarification.

Response D: "I want to speak to your manager immediately." Sentiment: negative. Intent: escalation. Action: route to management with priority flag.

Same sentiment polarity. One needs a knowledge base article. The other needs a phone call from a senior leader. Sentiment analysis puts them in the same bucket. Intent classification puts them in front of the right person. In simple terms, sentiment answers "how do they feel?" Intent answers "what do they need?"
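The four responses above can be grouped both ways to show the difference. This is a toy illustration with the labels typed in by hand; the `bucket` helper is a hypothetical name.

```python
from collections import defaultdict

# (response id, sentiment, intent), taken from Responses A-D above.
RESPONSES = [
    ("A", "positive", "advocacy"),
    ("B", "positive", "feature_request"),
    ("C", "negative", "question"),
    ("D", "negative", "escalation"),
]


def bucket(responses, key_index):
    """Group response ids by the label found at `key_index`."""
    buckets = defaultdict(list)
    for row in responses:
        buckets[row[key_index]].append(row[0])
    return dict(buckets)


by_sentiment = bucket(RESPONSES, 1)
by_intent = bucket(RESPONSES, 2)
print(by_sentiment)
print(by_intent)
```

Grouping by sentiment produces two buckets of two responses each. Grouping by intent produces four buckets, one per response, each with a different owner.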

What Intent Analysis Reveals Across Industries

Intent isn't always obvious. Most of the time, it shows up in quiet cues: a comment that sounds polite but hides frustration, a feedback note that feels neutral but carries urgency. Recognizing these patterns differs by industry.

Retail: Decoding Drop-Off and Purchase Friction

Your website is full of signals. A customer searches for a product, adds it to cart, and then leaves. A post-exit survey says: "I loved the design but wasn't sure it would fit my laptop." That's a feature request intent disguised as a neutral comment: the customer wants better product information.

At the checkout, "promo code didn't work" is complaint intent. Post-purchase, "the quality exceeded expectations, I've already told my team about it" is advocacy intent that should reach marketing within days, not sit in a CSAT spreadsheet.

Wondering how these signals map to team routing? Apply the five intent types to your last month of feedback. Most retail teams find that question intent (sizing, shipping, returns) dominates, and each recurring topic is a documentation fix that could prevent the next 40 tickets.

Healthcare: Uncovering Operational Friction

Patients might not always say outright what went wrong. "The doctor was fantastic, but I had to call twice to reschedule" contains two intents: advocacy (the doctor) and complaint (the scheduling process). Without intent classification, both signals disappear into a single "mixed" sentiment score.

In healthcare, question intent around billing, insurance coordination, and follow-up instructions often indicates confusion that can affect patient outcomes, not just satisfaction scores. A patient saying "I couldn't understand the discharge summary" isn't just confused: they're likely feeling anxious, unsupported, and at risk of a poor outcome. That's question intent with an urgency dimension that should route to the clinical communication team, not sit in a general satisfaction report.

Routing each signal to the clinical team or the administrative team, whichever the intent demands, is the kind of precision intent analysis enables.

Financial Services: Fixing Confusion Before It Becomes Lost Revenue

Financial products are complex, and many customers won't complain: they'll just disappear. "I wasn't sure if I qualified" reads as neutral. It's actually hesitation, an unasked question about eligibility, and if it's a pattern across your application flow, it's costing you conversions.

Complaint intent in financial services often co-occurs with effort signals: "Filed a dispute three weeks ago, still no update" carries both complaint intent and duration-type effort. Routing that to the disputes team with the effort context attached means they know the customer isn't just unhappy: they're exhausted.

Intent in Action: What Each Type Looks Like and What to Do

Understanding intent types is one thing. Knowing what to do when you spot them is what makes the analysis operational. Here are five common intent signals from real feedback, drawn from retail, each with a concrete response playbook: a way to turn vague complaints or scattered praise into targeted CX improvements.

1. Intent: Advocacy "This jacket is fantastic! The quality is amazing, and it fits perfectly. I'll definitely be recommending your brand to my friends."

Action: You can:

- Publicly acknowledge the positive feedback (respond to the review, share it on social media).

- Offer the customer a discount or loyalty program incentive.

- Use the feedback as a testimonial on your website or marketing materials.

The window is days: this customer's enthusiasm is at its peak right now. Advocacy intent detected and routed within 24 hours becomes a testimonial. Found in a quarterly report, it's a data point.

2. Intent: Complaint "The delivery was very slow. It took over a week for my order to arrive."

Action: You can:

- Issue an apology and offer a solution, such as expedited shipping on future orders.

- Investigate the cause of the slow delivery and implement corrective measures.

- Provide clear communication regarding shipping times on the website.

If this complaint clusters around the same logistics partner or region, it's not a one-off. It's a systemic issue your operations team should be seeing in trend data.

3. Intent: Feature Request "The website could be improved by adding a live chat feature for customer support."

Action: You can:

- Log the request in your product backlog with the source tagged.

- Acknowledge the suggestion and explain any potential challenges or timelines for implementation.

If this is the 50th time someone's asked for live chat, that's a demand signal your product team should be seeing alongside their own prioritization estimates.

4. Intent: Question "I'm not sure which size to order. Can you provide a size guide?"

Action: You can:

- Develop a detailed size guide with measurements and fit recommendations.

- Offer a customer service representative to answer sizing questions through chat or phone.

This is a documentation gap, not a support ticket. Every question intent signal that clusters around the same topic is a self-service fix that eliminates the next 40 tickets on that subject.

5. Intent: Question (confusion) "The return policy is very confusing. It's difficult to understand what items are eligible for return."

Action: You can:

- Simplify the return policy and clearly outline the terms and conditions on the website.

- Offer a dedicated FAQ section addressing common return-related questions.

Confusion is question intent that doubles as a UX signal: the information exists, but customers can't find it or can't understand it. Fixing confusion at the source reduces support volume and builds trust.

Intent-Based Routing: From Detection to Team Assignment

Intent detection without routing is analysis. Intent detection with routing is a workflow. Here's how the pipeline works from signal to action.

Step 1: Feedback arrives. From any source: NPS survey, CSAT comment, support ticket, Google review, social media mention, in-app feedback widget. The source doesn't change the intent classification process. The language carries the signal regardless of channel.

Step 2: AI classifies intent. The system analyzes the text and assigns one or more intent types. A single response can carry multiple intents: "I love the product (advocacy) but the mobile app needs offline mode (feature request)." Both intents are tagged.

Step 3: Routing rules map intent to teams. Each organization configures which team receives which intent type. Advocacy goes to marketing. Feature requests go to product. Complaints go to the support lead responsible for the relevant product area or location. These rules are configurable because organizational structures vary, but the intent types are universal.

Step 4: Auto-assignment with full context. The routed feedback arrives with more than just the text. It carries the classified intent, the detected themes, the experience quality signals (sentiment, effort, urgency, churn, emotion), and the mapped entities (which agent, location, product, or competitor is mentioned). The receiving team doesn't start from zero. They start with a structured signal.

Step 5: Follow-up tracked. Did the team act? Was the loop closed? Did the customer's next interaction reflect improvement? Closed-loop tracking is where intent detection connects to retention and revenue: it's not enough to route the signal if nobody measures whether the routing led to action.

Zonka Feedback classifies all five intent types and routes feedback automatically, because that's how we built the system. The routing happens at the moment feedback arrives, with the full context of themes, signals, and entities attached. No human has to read, categorize, and forward each response. The framework handles the classification; the routing rules handle the distribution.

When Intent Combines with Other Signals: Compound Routing

Intent classification is most powerful when it doesn't work alone. In the Feedback Intelligence Framework, intent sits in the experience signals pillar alongside the experience quality signals: sentiment, effort, urgency, churn risk, and emotion. When intent combines with these signals and with entity recognition, routing becomes compound: which team, with what priority, about what specifically.

Here are three examples of compound routing that single-signal systems can't replicate:

Complaint + high urgency + staff entity: "The agent was rude and I need this fixed before my renewal on Friday." This routes to the agent's manager (entity), with priority flagging (urgency), as a complaint (intent). Three signals, one routing decision, one person who sees it within the hour.

Feature request + competitor entity + churn signal: "I love using your product but we're testing Competitor X because you don't have a Slack integration." This routes to both the product team (feature request intent) and the account manager (churn risk), with the competitor name tagged as a switching trigger (entity). The product team sees demand. The account manager sees risk. Neither would have seen both from sentiment alone.

Advocacy + location entity + emotion (delight): "The downtown branch team made our event perfect. I've already told five colleagues." This routes to marketing (advocacy intent) and to the branch manager (location entity) for recognition. The delight emotion confirms the signal strength. Marketing gets a case study lead. The branch gets a win to share with staff.

Without intent classification, all three of these responses would land in a generic feedback queue. With it, each generates a specific, contextual action with a named owner and a clear timeline.
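A minimal sketch of compound routing, assuming the signals have already been detected upstream. The rules mirror the three examples above; the `Signals` fields, the recipient labels, and the entity name `agent_17` are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional

BASE_TEAM = {
    "advocacy": "marketing",
    "feature_request": "product",
    "question": "support",
    "complaint": "support",
    "escalation": "management",
}


@dataclass
class Signals:
    intent: str
    urgency: str = "normal"            # "normal" or "high"
    churn_risk: bool = False
    entity_type: Optional[str] = None  # "staff", "location", or "competitor"
    entity_name: Optional[str] = None


def compound_route(s: Signals) -> dict:
    """Combine intent, experience quality signals, and entity into one decision."""
    recipients = [BASE_TEAM[s.intent]]
    tags = []
    if s.intent == "complaint" and s.entity_type == "staff":
        # A complaint about a named person goes to that person's manager.
        recipients = [f"manager of {s.entity_name}"]
    if s.intent == "advocacy" and s.entity_type == "location":
        recipients.append(f"{s.entity_name} manager")
    if s.churn_risk:
        recipients.append("account manager")
    if s.entity_type == "competitor":
        tags.append(f"switching trigger: {s.entity_name}")
    priority = "high" if s.urgency == "high" or s.intent == "escalation" else "normal"
    return {"recipients": recipients, "priority": priority, "tags": tags}


rude_agent = compound_route(Signals("complaint", "high", entity_type="staff", entity_name="agent_17"))
slack_gap = compound_route(Signals("feature_request", churn_risk=True, entity_type="competitor", entity_name="Competitor X"))
branch_win = compound_route(Signals("advocacy", entity_type="location", entity_name="downtown branch"))
print(rude_agent, slack_gap, branch_win, sep="\n")
```

Each extra signal changes a different part of the decision: the entity changes who receives it, urgency changes the priority, and churn risk adds a second recipient.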

Why Most Feedback Routing Fails Without Intent Analysis

Most CX programs have a routing problem they don't recognize as one. Feedback arrives, gets stored, and eventually gets reviewed. But the routing, the question of who sees which feedback and when, is either manual, absent, or based solely on the channel it arrived through: survey responses go to the CX team, tickets go to support, reviews go to marketing.

Channel-based routing misses what intent-based routing catches: that a survey response can contain a feature request meant for the product team, that a support ticket can contain advocacy language meant for marketing, and that a Google review can contain an escalation signal that needs management attention today.

Zonka Feedback's AI in Feedback Analytics 2025 report found that 66% of CX leaders report slow or missing feedback-action loops, largely because of disconnected systems and manual follow-ups. Intent analysis doesn't fix every part of that gap, but it fixes the most expensive one: the delay between a signal arriving and the right person seeing it. When that delay shrinks from weeks to hours, recovery rates increase, advocacy gets captured, and feature intelligence reaches the product team while it's still relevant. Intent signals also feed directly into the feedback prioritization matrix, where escalation and complaint themes with worsening trends get auto-assigned to the "Fix Now" quadrant.

Can You Run Intent Analysis with ChatGPT?

Yes, partially. If you paste customer feedback into ChatGPT or Claude with a structured prompt based on the Feedback Intelligence Framework that asks for the five intent types, the results are surprisingly accurate for individual responses. The AI will classify "I've told all my friends" as advocacy and "I want to speak to a manager" as escalation.

The limitation isn't quality. It's persistence. ChatGPT can't track intent patterns across sessions. It doesn't remember that escalation signals spiked 40% last month or that feature requests for a Slack integration have come from 12 different customers. It can't auto-route classified intent to the right team. And it processes roughly 50 responses per session before context degrades.

For teams analyzing small volumes or testing the framework before committing to a platform, framework-based prompting in ChatGPT is a legitimate starting point. For teams processing hundreds or thousands of responses monthly and needing trend data, entity mapping, and automated routing, a purpose-built system handles what session-based tools can't.
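If you want to try the prompting route, a structured prompt and a strict reply format make the results easier to reuse. The sketch below only builds the prompt text and parses a JSON reply; it makes no API calls. The prompt wording is an assumption written for illustration, not the framework's official prompt, and the batch size of 50 follows the rough per-session limit mentioned above.

```python
import json

INTENT_TYPES = ["advocacy", "feature_request", "question", "complaint", "escalation"]


def batches(responses: list[str], size: int = 50):
    """Split feedback into chunks small enough for one chat session."""
    for start in range(0, len(responses), size):
        yield responses[start:start + size]


def build_prompt(responses: list[str]) -> str:
    """Build a classification prompt to paste into a chat assistant."""
    numbered = "\n".join(f"{i}. {text}" for i, text in enumerate(responses, start=1))
    return (
        "Classify each customer feedback response below by intent.\n"
        f"Allowed intent types: {', '.join(INTENT_TYPES)}.\n"
        "A response may carry more than one intent.\n"
        'Reply with JSON only: a list of objects like {"id": 1, "intents": ["advocacy"]}.\n\n'
        f"{numbered}"
    )


def parse_reply(reply: str) -> dict[int, list[str]]:
    """Parse the assistant's JSON reply, dropping any label outside the five types."""
    return {
        item["id"]: [label for label in item["intents"] if label in INTENT_TYPES]
        for item in json.loads(reply)
    }


prompt = build_prompt(["I've told all my friends about you.", "I want to speak to a manager."])
print(prompt)
```

Filtering the reply against the five allowed labels keeps an invented category from slipping into your counts. Tracking trends across batches is still up to you, which is the persistence gap described above.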

Best Practices for Intent Analysis in CX

Start with the feedback you already have. Map what you already collect across touchpoints (checkout, onboarding, support follow-ups) and label it by journey stage, not channel. Understanding where intent surfaces helps you interpret it more accurately.

Prioritize signals that carry business consequences. Escalation and churn signals demand hours, not weeks. Feature requests can aggregate over weeks before you act. Advocacy signals have a shelf life of days. Build your response cadence around the action window for each intent type.

Look for patterns, not isolated comments. One complaint is an opinion. Ten similar complaints are a pattern. Use classification to group recurring intents by location, product, or customer segment. That's how you find systemic CX gaps rather than one-off issues.

Align intent categories across teams. Define a consistent set of intent types and use them across support, product, and marketing. When everyone uses the same classification, routing becomes automatic and there's no confusion about who owns which signal.

Act while intent is still relevant. A follow-up delayed by days can turn a potential advocate into a detractor. Build processes to detect and route critical intent fast, especially at high-friction or high-churn moments in the journey.

Close the loop. Surface the insight, route it, act on it, and let the customer know. Visibility into follow-through builds trust and justifies collecting feedback in the first place.

From Signals to Action: Making Intent Analysis Work for Your Team

Intent analysis is the signal layer that turns feedback from a reporting exercise into an operational workflow. The quality of your analysis doesn't matter if the insight doesn't reach the right person at the right time. Intent classification is what makes that routing possible: five types, five destinations, five different action windows.

The shift from sentiment-only analysis to intent-aware routing changes what feedback programs can do. Advocacy signals reach marketing while enthusiasm is fresh. Feature requests reach product teams while demand is building. Complaints reach the right operations lead with the entity context and urgency level attached. Escalations reach management within minutes, not days. Every signal has a home, and every home has a defined response.

If you want to see the gap in your own data, try this: take your last week of feedback and tag each response with one of the five intent types. Then ask two questions: which of those responses actually reached the team that should have acted on it? And how many days passed between the signal arriving and someone seeing it? For most organizations, the answer to the first question is fewer than half, and the answer to the second is "too many."
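Those two questions reduce to two numbers you can compute from a hand-tagged sample. The sketch below assumes each record notes the tagged intent, which team (if any) saw it, and when. The record fields and the `routing_gap` name are hypothetical, and the sample data is made up to show the calculation.

```python
from datetime import datetime
from statistics import median

EXPECTED_TEAM = {
    "advocacy": "marketing",
    "feature_request": "product",
    "question": "support",
    "complaint": "support",
    "escalation": "management",
}


def routing_gap(records: list[dict]) -> dict:
    """Share of responses seen by the right team, and median days until first seen."""
    reached = sum(1 for r in records if r["seen_by"] == EXPECTED_TEAM[r["intent"]])
    delays = [
        (r["seen_at"] - r["received_at"]).total_seconds() / 86400
        for r in records
        if r["seen_at"] is not None
    ]
    return {
        "share_reached_right_team": reached / len(records),
        "median_days_to_first_view": median(delays) if delays else None,
    }


# Made-up sample: four tagged responses from one week.
week = [
    {"intent": "advocacy", "seen_by": "marketing",
     "received_at": datetime(2025, 1, 6), "seen_at": datetime(2025, 1, 7)},
    {"intent": "feature_request", "seen_by": "cx",
     "received_at": datetime(2025, 1, 6), "seen_at": datetime(2025, 1, 16)},
    {"intent": "complaint", "seen_by": "support",
     "received_at": datetime(2025, 1, 7), "seen_at": datetime(2025, 1, 10)},
    {"intent": "escalation", "seen_by": None,
     "received_at": datetime(2025, 1, 8), "seen_at": None},
]
gap = routing_gap(week)
print(gap)
```

In this sample, half of the responses reached the right team and the median wait was three days, while the escalation was never seen at all. Responses nobody saw are excluded from the median, so read the two numbers together.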

That's the routing gap intent analysis closes. The teams that detect intent at the moment feedback arrives are the ones turning customers into advocates, feature requests into roadmap wins, and complaints into recovery stories. Intent has a shelf life. The signal is strongest when it's fresh. The organizations that act on it fastest are the ones building CX programs that compound, not just report.
